The Data Sovereignty Question European Companies Can’t Ignore

71% of organizations cite cross-border data transfer compliance as their top regulatory challenge. For European companies, this isn’t abstract.

Europe has issued over EUR 6.7 billion in GDPR fines since 2018. The regulators aren’t bluffing. And the trend is accelerating: 2025 alone accounted for EUR 2.3 billion, a 38% year-over-year increase.

When your AI processes customer data, employee records, or business-sensitive information through a US cloud provider, you’re introducing compliance risk. For some companies, that risk is acceptable. For others, it’s a dealbreaker.

When On-Premise Makes Sense

Not every company needs on-premise AI. Cloud APIs from OpenAI, Anthropic, or Google work fine for non-sensitive applications such as content generation, internal tooling, and general analysis.

On-premise becomes the right call in three situations.

You process personal data under GDPR and need to guarantee it never leaves EU jurisdiction. Data processing agreements with cloud providers help, but they don’t eliminate the risk of third-country data access.

You work in a regulated industry (healthcare, finance, legal, government) with specific data handling requirements that cloud providers can’t satisfy.

You process proprietary business data (trade secrets, unreleased product designs, competitive intelligence) that you don’t want on someone else’s servers. Period.

The Technical Options

On-premise AI isn’t one thing. It’s a spectrum of deployment options with different trade-offs.

Self-hosted open-source models (Llama 3, Mistral, DeepSeek V3) give you the most control. You run the model on your own hardware or private cloud. No data leaves your network. No third-party dependencies.

DeepSeek V3 scores within a few percentage points of GPT-4o on most benchmarks at dramatically lower cost. Open-source models have closed the quality gap significantly.

EU cloud providers (OVHcloud, Hetzner, IONOS, Open Telekom Cloud) offer a middle ground. Your data stays in EU data centers under EU jurisdiction, but you don’t manage the hardware yourself.

Private cloud deployments on AWS or Azure with EU region restrictions keep data within EU borders while using familiar infrastructure. This satisfies many compliance requirements but still involves a US provider.

Cost Comparison

Cloud API costs scale linearly with usage. GPT-4o costs roughly EUR 2-10 per million tokens. At high volume, this adds up fast.

Self-hosted models have higher upfront infrastructure cost but near-zero per-query marginal cost. A capable GPU setup (A100 or equivalent) runs EUR 500-2,000/month for hosting.

The break-even point: at roughly 50,000 queries per month, self-hosting starts to become cheaper than cloud APIs. The exact figure depends on how many tokens each query consumes. Below that volume, cloud is simpler and more cost-effective.

Here’s the trade-off most companies miss. Self-hosting requires operational expertise: GPU management, model updates, monitoring, scaling. If you don’t have that in-house, the management cost erodes the savings.
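To sanity-check that break-even figure against your own numbers, here is a rough sketch in Python. The function name, the `ops_eur_per_month` parameter, and the 2,000 tokens per query are assumptions for illustration, not measured values. The price and hosting inputs come from the ranges quoted above.

```python
def break_even_queries(hosting_eur_per_month: float,
                       api_eur_per_million_tokens: float,
                       tokens_per_query: int,
                       ops_eur_per_month: float = 0.0) -> float:
    """Monthly query volume at which self-hosting costs the same as the cloud API.

    Treats self-hosting as a fixed monthly cost (hosting plus operations) and
    the cloud API as a purely per-token cost. Both are simplifications.
    """
    api_eur_per_query = api_eur_per_million_tokens * tokens_per_query / 1_000_000
    fixed_eur_per_month = hosting_eur_per_month + ops_eur_per_month
    return fixed_eur_per_month / api_eur_per_query


# Illustrative inputs only: EUR 1,000/month hosting, EUR 10 per million tokens,
# and an assumed 2,000 tokens per query.
hardware_only = break_even_queries(1_000, 10, 2_000)

# Same inputs, plus an assumed EUR 1,000/month of operational effort.
with_ops = break_even_queries(1_000, 10, 2_000, ops_eur_per_month=1_000)

print(round(hardware_only), round(with_ops))
```

With these inputs the break-even lands at about 50,000 queries per month. Adding EUR 1,000 per month of operational cost doubles it, which is the erosion described above.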

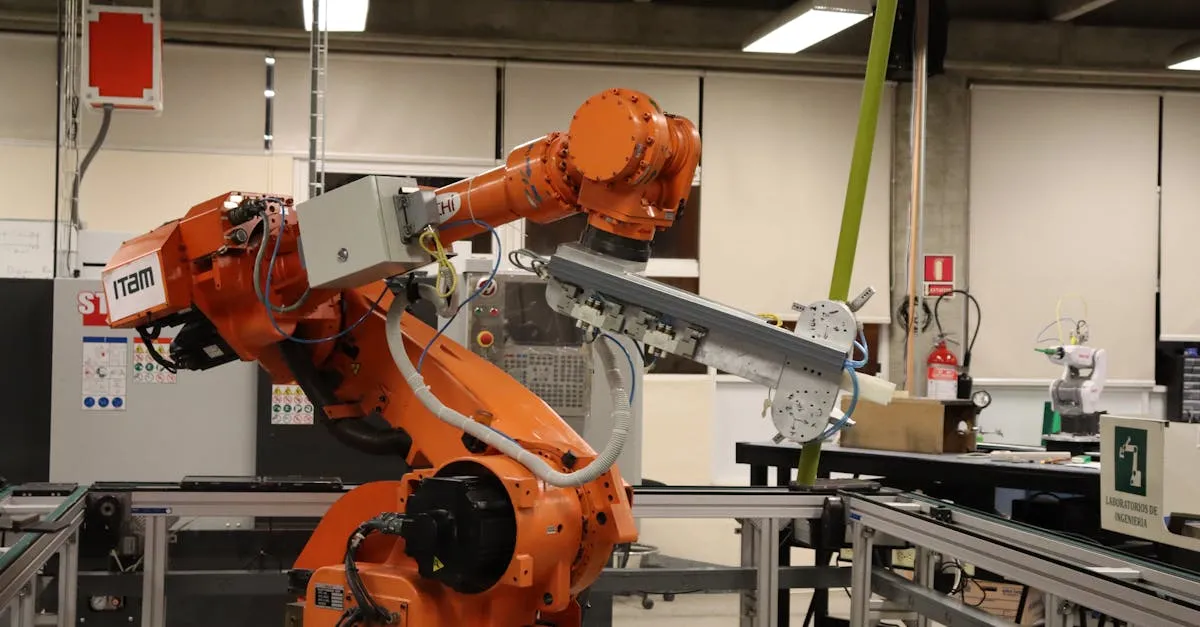

Architecture for Privacy-First AI

The architecture mirrors cloud-based systems with one key difference: your data never leaves your controlled environment.

Your document processing pipeline runs on-premise. OCR, extraction, and validation happen within your network. Results flow to your internal systems via private APIs.

Your RAG system uses a locally hosted vector database (Weaviate, Qdrant, or Milvus) and a locally running LLM. Employee queries and internal documents stay entirely within your infrastructure.

Your support triage system processes customer tickets locally. No customer data touches external APIs.
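One way to back that rule up in application code is an allowlist check before any outbound model call. This is a minimal sketch, assuming a hypothetical internal domain `internal.example` and hypothetical function names. Network-level controls such as firewall egress rules would still be needed alongside it.

```python
from urllib.parse import urlsplit

# Hypothetical internal domain; replace with the hosts you actually control.
ALLOWED_SUFFIXES = ("internal.example",)


def is_internal_endpoint(url: str, allowed_suffixes=ALLOWED_SUFFIXES) -> bool:
    """Return True only if the URL's host is an allowed domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == s or host.endswith("." + s) for s in allowed_suffixes)


def assert_internal(url: str) -> str:
    """Raise before a request is made if the endpoint is outside the allowlist."""
    if not is_internal_endpoint(url):
        raise PermissionError(f"Blocked external endpoint: {url}")
    return url
```

Calling `assert_internal(...)` at the single place where your code builds model requests keeps the check in one spot instead of scattered across services.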

The EU AI Act adds another layer. Most of its provisions apply from August 2026. On-premise deployment gives you direct control over the audit trails, model behavior documentation, and human oversight mechanisms that the Act requires.

The Hybrid Approach

Most European companies don’t go fully on-premise. They use a hybrid model.

Sensitive data processing (customer PII, financial records, employee data) runs on-premise or on EU-only infrastructure. Non-sensitive AI applications (content generation, code assistance, general research) use cloud APIs.

This gives you compliance where it matters and convenience where it doesn’t. The architecture uses a routing layer that classifies data sensitivity and directs queries to the appropriate processing environment.
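A routing layer like that can start very small. The sketch below is an illustration, not a production classifier: the regex patterns, the keyword list, and the names `classify` and `route` are all assumptions, and a real deployment would need a vetted PII detector.

```python
import re
from typing import Callable, Dict

ON_PREM = "on_prem"
CLOUD = "cloud"

# Hypothetical patterns for illustration; they will miss many kinds of sensitive data.
SENSITIVE_PATTERNS = [
    re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),           # email address
    re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),   # IBAN-like string
    re.compile(r"\b(salary|diagnosis|passport)\b", re.IGNORECASE),
]


def classify(text: str) -> str:
    """Label a query as on-premise if any sensitive pattern matches, else cloud."""
    if any(p.search(text) for p in SENSITIVE_PATTERNS):
        return ON_PREM
    return CLOUD


def route(text: str, handlers: Dict[str, Callable[[str], str]]) -> str:
    """Send the query to the handler registered for its sensitivity label."""
    return handlers[classify(text)](text)


# Stand-in handlers; real ones would call a local model or a cloud API.
handlers = {
    ON_PREM: lambda q: f"[on-prem] {q}",
    CLOUD: lambda q: f"[cloud] {q}",
}

print(route("Refund request from anna@example.com", handlers))
print(route("Draft a blog post about GPU pricing", handlers))
```

A cautious design choice is to fail closed: if the classifier errors out or is unsure, send the query to the on-premise path rather than the cloud one.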

One financial services client runs all customer-facing AI on-premise while using cloud APIs for internal productivity tools. The split keeps compliance auditors happy without sacrificing the team’s access to the latest models.

Migration Path

If you’re currently using cloud AI and need to move on-premise, don’t do it all at once.

Start with your most sensitive workload. Build the on-premise infrastructure for that one use case. Validate performance and reliability against your cloud baseline.
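Validating against the cloud baseline works best with explicit pass/fail criteria. Here is a minimal sketch, assuming you have already measured accuracy, p95 latency, and error rate for both environments on the same evaluation set. The `RunStats` fields and every threshold are hypothetical defaults to adjust.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunStats:
    accuracy: float         # fraction of evaluation cases answered correctly
    p95_latency_ms: float   # 95th percentile response time in milliseconds
    error_rate: float       # fraction of requests that failed


def failed_checks(on_prem: RunStats,
                  cloud: RunStats,
                  max_accuracy_drop: float = 0.02,
                  max_latency_ratio: float = 1.5,
                  max_error_rate: float = 0.01) -> List[str]:
    """Return the names of the checks the on-premise run fails; empty means it passes."""
    failures = []
    if cloud.accuracy - on_prem.accuracy > max_accuracy_drop:
        failures.append("accuracy")
    if on_prem.p95_latency_ms > cloud.p95_latency_ms * max_latency_ratio:
        failures.append("latency")
    if on_prem.error_rate > max_error_rate:
        failures.append("error_rate")
    return failures


# Made-up measurements, purely to show the call.
cloud_baseline = RunStats(accuracy=0.90, p95_latency_ms=800, error_rate=0.002)
on_prem_run = RunStats(accuracy=0.89, p95_latency_ms=1000, error_rate=0.004)
print(failed_checks(on_prem_run, cloud_baseline))
```

An empty list means the workload is ready to cut over. Anything else tells you which dimension to work on before migrating the next one.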

Then migrate additional workloads one at a time. Each migration teaches lessons that make the next one smoother.

The common mistake: trying to replicate your entire cloud AI stack on-premise in one project. That’s a recipe for delays, cost overruns, and frustrated teams.

For the broader perspective on AI integration architecture, read our AI workflow integration guide. And for cost considerations, our AI integration cost guide covers both cloud and on-premise scenarios.

Need AI that keeps your data under your control? Let’s architect a privacy-first solution. We’ll design an on-premise or EU-hosted deployment that meets your compliance requirements without sacrificing capability.